Is WAN 2.5 Suitable for an AI Character Creator?

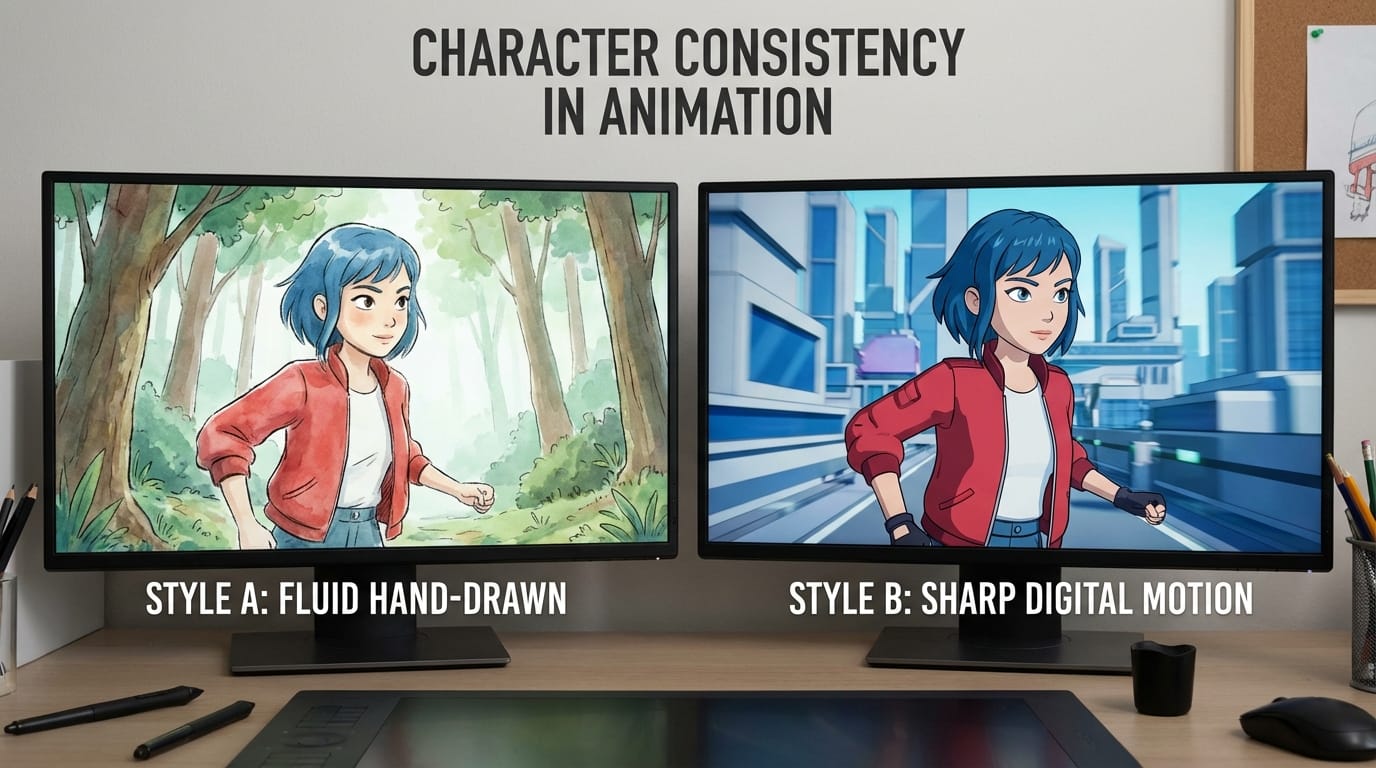

An AI character creator typically demands precise control over visual identity, fluid motion, and narrative continuity. When bringing a static character design to life, the primary challenge is maintaining facial features, clothing details, and overall aesthetic across multiple frames.

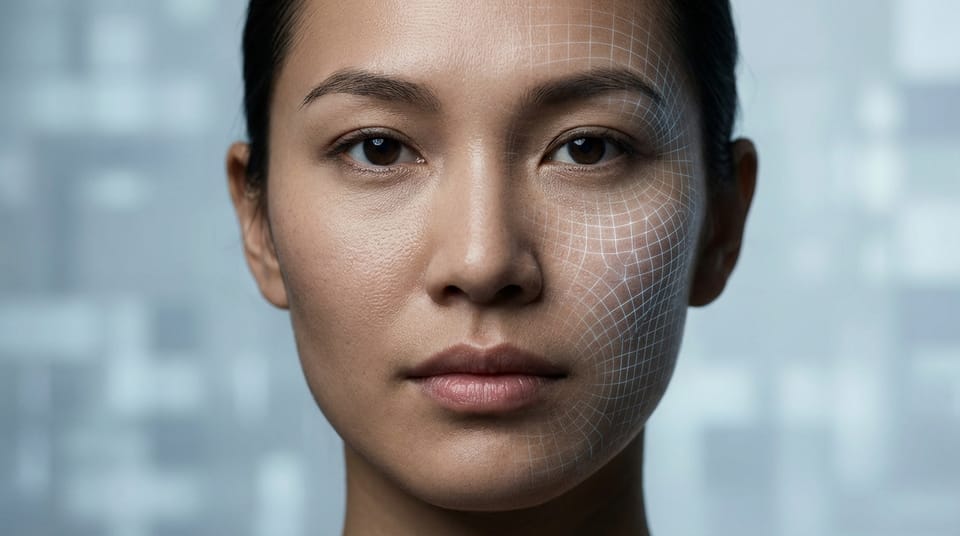

WAN Video 2.5, released in the fall of 2025 as a transitional flagship model, aligns exceptionally well with these core requirements. Its robust multi-reference input capabilities and significant improvements in character consistency make it a highly capable tool for character-driven video generation.

Operating directly within the Hotgen.AI platform, WAN 2.5 allows creators to bypass complex local setups. Users can generate 10- to 15-second clips with integrated lip-sync and basic audio, which significantly streamlines the character animation workflow.

Typical Workflow and Demands of an AI Character Creator

Professionals focusing on AI character creation usually start with a static concept, often a character sheet or a highly detailed portrait. Their ultimate goal is to turn these static assets into dynamic visual narratives, such as VTuber introductions, cinematic shorts, or social media reels.

This audience prioritizes high fidelity to the original design. If a character has a specific scar, eye color, or asymmetrical outfit, the generated video must retain those traits without morphing or degrading over time.

Efficiency is another critical factor. Creators need tools that minimize the necessity for heavy post-production corrections. They look for models that handle natural human motion, facial expressions, and basic environmental interactions without requiring frame-by-frame manual adjustments.

How WAN 2.5 Meets the Needs of an AI Character Creator

WAN 2.5 addresses the character consistency problem head-on. By supporting multi-reference inputs combining both image and text, creators can anchor the video generation to a specific character design while directing the action through text prompts.

The model extends video generation duration to a solid 10-15 seconds. This length is ideal for establishing character presence, allowing enough time for a complete action or a short spoken sentence, which is vital for narrative continuity.

Furthermore, the integration of lip-sync and basic audio generation within WAN 2.5 removes a massive friction point. Instead of generating a silent video and using a separate tool to animate the mouth to an audio track, creators can achieve a cohesive result directly.

To illustrate, an AI character creator generating a cinematic dialogue scene might use a prompt like this:

A close-up shot of a cyberpunk female character with neon blue hair and a mechanical left arm, standing in a rainy alleyway. She looks directly into the camera and speaks with natural lip-sync, her expression determined. The neon lights reflect in her eyes.
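Because character consistency depends on restating the same identifying traits in every generation, it can help to assemble prompts from a fixed character description rather than retyping them. The following is a minimal sketch of that idea. `CharacterSpec` and `build_prompt` are hypothetical helper names invented for illustration; they are not part of WAN 2.5 or Hotgen.AI, and the output is simply a text prompt to paste into the generator.

```python
from dataclasses import dataclass, field


@dataclass
class CharacterSpec:
    """Fixed visual traits that should appear in every prompt for a character."""
    description: str                                  # e.g. "cyberpunk female character"
    traits: list[str] = field(default_factory=list)   # e.g. ["neon blue hair"]


def build_prompt(character: CharacterSpec, shot: str, action: str, setting: str = "") -> str:
    """Assemble a prompt that always restates the character's fixed traits."""
    subject = character.description
    if character.traits:
        subject += " with " + " and ".join(character.traits)
    first_sentence = f"{shot} of a {subject}"
    if setting:
        first_sentence += f", {setting}"
    return f"{first_sentence}. {action}"


# Hypothetical character reused across several clips.
heroine = CharacterSpec(
    description="cyberpunk female character",
    traits=["neon blue hair", "a mechanical left arm"],
)

prompt = build_prompt(
    heroine,
    shot="A close-up shot",
    action="She looks directly into the camera and speaks with natural lip-sync, her expression determined.",
    setting="standing in a rainy alleyway",
)
print(prompt)
```

Changing only `shot`, `action`, and `setting` between generations keeps the identifying traits word-for-word identical, which makes it easier to tell whether a drift in appearance comes from the model or from the prompt.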

Common Use Cases for an AI Character Creator Using WAN 2.5

The model's dual capabilities in text-to-video and image-to-video open up specific, highly efficient workflows for character design and animation.

1. Animating Static Character Sheets (Image-to-Video)

Creators often have a finished illustration of their character. By uploading this image as a reference alongside a text prompt, they can breathe life into the static art. This workflow is perfect for creating dynamic portfolio pieces or character teaser trailers. You can explore this workflow using the Image-to-Video feature.

Example Prompt:

The character in the reference image gently blinks, turns her head slightly to the right, and smiles warmly at the camera. Soft, natural sunlight illuminates her face, hair blowing gently in the wind.

2. Generating Action Sequences from Scratch (Text-to-Video)

When a creator needs B-roll footage or specific narrative actions without a pre-existing image, they rely on detailed text prompts. WAN 2.5's motion naturalness handles actions like walking, gesturing, or interacting with objects smoothly. This is executed via the Text-to-Video module.

Example Prompt:

A fantasy male warrior in heavy silver armor walking confidently through a dense, foggy forest. He draws his glowing sword, the camera tracking his movement smoothly from a medium distance.

What an AI Character Creator Should Keep in Mind When Using WAN 2.5

While highly capable, WAN 2.5 is a transitional release. Creators should be aware that camera switching can occasionally become unstable. To mitigate this, it is advisable to stick to single, continuous camera movements rather than prompting for rapid cuts or complex cinematic transitions within a single generation.
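One way to apply this advice before spending credits is to scan a prompt for wording that asks for more than one camera setup. The sketch below is only an illustration: the phrase list is an assumption chosen for this example, not a documented list of terms that WAN 2.5 handles poorly, and `find_cut_phrases` is a hypothetical helper.

```python
import re

# Assumed examples of wording that requests cuts or multiple camera setups.
CUT_PHRASES = [
    "cut to",
    "cuts to",
    "jump cut",
    "switch to",
    "switches to",
    "montage",
    "split screen",
    "multiple angles",
]


def find_cut_phrases(prompt: str) -> list[str]:
    """Return the phrases from CUT_PHRASES that appear in the prompt."""
    lowered = prompt.lower()
    return [
        phrase
        for phrase in CUT_PHRASES
        if re.search(r"\b" + re.escape(phrase) + r"\b", lowered)
    ]


steady = (
    "A fantasy male warrior in heavy silver armor walking confidently through "
    "a dense, foggy forest. The camera tracks his movement from a medium distance."
)
risky = "The warrior draws his sword, then cut to a close-up of his face."

print(find_cut_phrases(steady))
print(find_cut_phrases(risky))
```

A prompt that returns an empty list is not guaranteed to render with stable camera work; the check only catches explicit requests for cuts so they can be split into separate generations.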

The built-in audio works better as a foundational layer than as a final studio mix. The lip-sync is effective for straightforward monologues, but complex multi-character dialogue scenes may expose the model's limitations compared to later iterations such as version 2.6.

From a resource perspective, generating 10-15 second video clips with audio requires careful credit management. Creators should refine their text prompts and test with shorter durations or fewer references before committing to the final, high-resolution generation.

Which AI Character Creators Should Avoid WAN 2.5

Creators whose projects rely heavily on rapid, multi-angle camera cuts within a single generation will find the occasional instability of WAN 2.5 frustrating. The model performs best with steady, deliberate camera work.

Additionally, those building scenes with three or more characters engaging in overlapping, complex dialogue should look elsewhere. The model struggles to maintain perfect audio-visual synchronization and character distinction when the narrative density becomes too high.

How to Maximize Efficiency with WAN 2.5 on Hotgen.AI

Hotgen.AI provides a streamlined environment that eliminates the technical overhead of running video models locally. Creators can manage their workflow entirely within the browser, using a unified credit system that removes the need to juggle multiple subscriptions.

To ensure WAN 2.5 is the right choice for a specific character project, users can navigate to the Model Library to review its technical specifications alongside other available tools.

For projects requiring a delicate balance between motion and high-fidelity textures, checking the Model Compare feature allows creators to benchmark WAN 2.5 against other top-tier video generation models before initiating a massive rendering batch.

Final Verdict: Is WAN 2.5 Worth Trying for an AI Character Creator?

WAN 2.5 is a highly practical tool for AI character creators focused on single-character animations, VTuber assets, and narrative-driven monologues. Its strong character consistency and built-in lip-sync solve major pain points in the animation pipeline.

If your work demands reliable motion, 10-15 seconds of continuous narrative, and the ability to drive video from static character art, this model fits the criteria well. Understanding its limitations with complex camera work and planning shots around them helps keep the workflow smooth and productive.

To integrate this model into your character creation pipeline, visit the Hotgen.AI official website. You can access the specific model details at the WAN 2.5 landing page, or sign in to start creating and testing its capabilities with your own character designs.